Lidar uses laser pulses to build detailed maps, offering high accuracy at a higher cost and power draw. Cameras rely on visual cues to navigate and recognize obstacles; they work well in good light but struggle in darkness or clutter. Random algorithms move unpredictably, which makes them simple and energy-efficient but imprecise. Understanding these differences helps you choose the best option for your home; keep reading for a clearer picture of each method.

Key Takeaways

- Lidar offers high-precision mapping and obstacle detection but is more costly and power-consuming than cameras or random algorithms.

- Camera systems provide rich environmental details and perform well in good lighting but struggle in low-light conditions.

- Random movement algorithms are simple, energy-efficient, and inexpensive but lack accuracy and detailed environment awareness.

- Sensor fusion combines methods to improve navigation reliability, compensating for individual limitations of lidar, cameras, or randomness.

- Choice depends on environment complexity, maintenance needs, calibration importance, and user preferences for accuracy versus cost.

How Lidar Works and Its Impact on Navigation

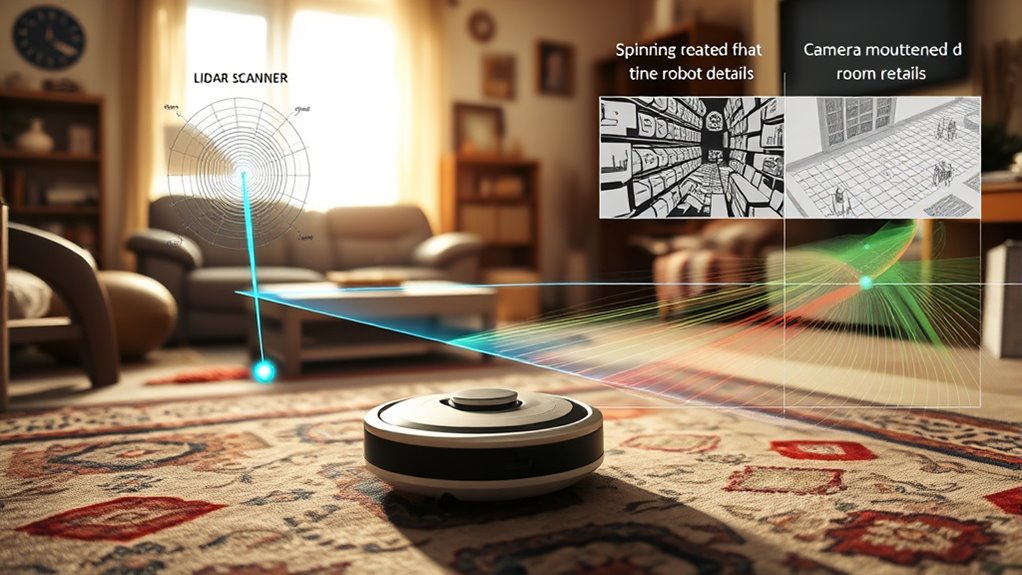

Lidar works by emitting laser pulses that bounce off surrounding objects and return to a sensor. Each return yields a distance, and many such measurements together form a detailed map of the environment. Accuracy depends on proper sensor calibration, which aligns the lidar so the data it collects is reliable. Once calibrated, the system uses data fusion to combine lidar readings with other sensor inputs, such as IMUs or wheel odometry, to improve navigation precision. This integration lets your robot build a fuller understanding of its surroundings, even in complex environments. By accurately measuring distances and shapes, lidar helps your robot avoid obstacles and plan paths efficiently. Together, calibration and data fusion support consistent, real-time mapping, which is what makes lidar a powerful tool in autonomous navigation.
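
To make the distance measurement concrete, here is a minimal sketch of the time-of-flight idea: the pulse travels out and back, so the distance is half the round-trip time multiplied by the speed of light. It also shows how one sweep of range readings could be turned into points on a floor plan. The function names and the assumption of evenly spaced readings are illustrative only, and some consumer lidar units measure distance by triangulation rather than timing.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a surface from a pulse's round-trip time (out and back)."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def scan_to_points(ranges_m, start_angle_rad=0.0, step_rad=None):
    """Convert one sweep of evenly spaced range readings into (x, y) points.

    Points are expressed in the sensor's own frame. By default the readings
    are assumed to cover a full 360-degree turn (an assumption of this sketch).
    """
    if not ranges_m:
        return []
    if step_rad is None:
        step_rad = 2.0 * math.pi / len(ranges_m)
    points = []
    for i, r in enumerate(ranges_m):
        angle = start_angle_rad + i * step_rad
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


if __name__ == "__main__":
    # A 20-nanosecond round trip corresponds to roughly 3 metres.
    print(round(tof_distance_m(20e-9), 3))
    # Four readings taken a quarter turn apart.
    print(scan_to_points([1.0, 2.0, 1.0, 2.0]))
```

A real mapping pipeline would also correct each point for the robot’s own motion during the sweep, which is where the fusion with IMU or odometry data comes in.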

Camera-Based Systems: Visual Mapping and Recognition

Camera-based systems leverage visual data to create detailed maps and recognize objects in the environment. They use color recognition to identify different surfaces, obstacles, and even small items, enhancing navigation accuracy. Depth perception is achieved through stereoscopic or monocular cues, allowing the robot to estimate distances and avoid collisions effectively. Cameras provide rich visual context, helping the robot distinguish between furniture, walls, and open spaces. This detailed environmental understanding enables precise path planning and better obstacle avoidance. Unlike Lidar, cameras can identify textures and colors, which can be useful for recognizing specific objects or zones. However, lighting conditions can affect performance, and the system’s ability to interpret depth relies heavily on the quality of the visual data captured. Sensor technology advancements continue to improve the robustness of camera-based navigation systems under varying environmental conditions.
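
For the stereoscopic case, depth estimation can be illustrated with the standard relation for a calibrated, rectified camera pair: depth equals focal length times baseline divided by disparity. The sketch below assumes that setup; the function name and the example numbers are hypothetical and not taken from any particular vacuum.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centres, in metres
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity or unmatched.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Illustrative values only: halving the disparity doubles the estimated depth.
    for disparity in (42.0, 21.0, 10.5):
        print(disparity, stereo_depth_m(700.0, 0.06, disparity))
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant objects than for near ones. That is one reason depth quality depends so heavily on image quality and lighting.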

The Mechanics of Random Movement Algorithms

Understanding how random movement algorithms work is key to grasping their strengths and limitations. You’ll see how they choose paths unpredictably and how they respond when obstacles appear. These mechanics determine how effectively a robot explores an unfamiliar environment. Because each decision involves chance, exploration patterns vary from run to run. Sensor data still matters: the robot adjusts its movement based on what it detects in real time, such as contact with an obstacle.

Random Path Selection

Random path selection algorithms make movement decisions without relying on detailed environmental data. The approach doesn’t depend on advanced mapping; instead, the robot chooses directions at random, which can be effective in simple environments. Software updates may refine how the vacuum chooses its path or compensate for sensor inconsistencies, but the core idea stays the same. While this method is straightforward, it is inefficient in complex spaces, where the robot may revisit some areas repeatedly and miss others. Still, it offers an easy-to-implement solution when other sensors or mapping systems aren’t available or reliable. Overall, random path selection provides a simple, if unpredictable, way for robot vacuums to cover space.

Obstacle Encounter Handling

When an obstacle blocks the path, a random movement algorithm alters the robot’s trajectory without prior planning: the robot changes direction, often to a new random heading, and carries on. Detection depends on the robot’s obstacle sensors being accurate. If they are poorly calibrated, the robot may misread its surroundings, leading to inefficient navigation or repeated collisions. Software updates can improve obstacle handling by fixing bugs and refining detection and decision-making. Keeping sensors in good order and applying updates helps your robot respond quickly and accurately to unexpected obstacles, so it keeps cleaning without unnecessary interruptions.
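
The behavior described in this section can be sketched as a small simulation: drive straight, and on a simulated bump pick a new random heading. This is an illustrative model rather than any manufacturer’s algorithm. The empty rectangular room, the step size, and the grid used to score coverage are all assumptions made for the example.

```python
import math
import random


def simulate_random_bounce(width_m=4.0, height_m=3.0, step_m=0.1,
                           steps=5000, cell_m=0.25, seed=0):
    """Drive straight until the next step would hit a wall, then turn randomly.

    Returns (fraction of floor cells visited, number of wall bumps).
    """
    rng = random.Random(seed)  # seeded so a run can be repeated exactly
    x, y = width_m / 2.0, height_m / 2.0
    heading = rng.uniform(0.0, 2.0 * math.pi)
    visited = {(int(x // cell_m), int(y // cell_m))}
    bumps = 0

    for _ in range(steps):
        next_x = x + step_m * math.cos(heading)
        next_y = y + step_m * math.sin(heading)
        if 0.0 <= next_x < width_m and 0.0 <= next_y < height_m:
            x, y = next_x, next_y
            visited.add((int(x // cell_m), int(y // cell_m)))
        else:
            # Stand-in for a bumper hit: stay put and choose a fresh heading.
            bumps += 1
            heading = rng.uniform(0.0, 2.0 * math.pi)

    total_cells = math.ceil(width_m / cell_m) * math.ceil(height_m / cell_m)
    return len(visited) / total_cells, bumps


if __name__ == "__main__":
    for n in (200, 1000, 5000):
        coverage, bumps = simulate_random_bounce(steps=n)
        print(f"{n} steps: {coverage:.0%} of cells visited, {bumps} bumps")
```

Running it with increasing step counts shows the characteristic pattern of random coverage: early steps reach new floor quickly, while later steps increasingly retrace ground already covered.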

Comparing Accuracy and Efficiency in Navigation Technologies

When comparing navigation technologies, you’ll notice differences in mapping precision, obstacle detection speed, and power consumption. Some systems offer highly accurate maps but require more energy, while others prioritize quick obstacle detection with less precision. Understanding these trade-offs helps you choose the best solution for your specific needs. Additionally, considering how environmental factors influence sensor performance can further optimize your selection.

Mapping Precision Differences

Mapping precision is vital in determining how effectively navigation systems can interpret their environment. High accuracy depends on proper sensor calibration and seamless data integration. Here’s what influences mapping precision:

- Sensor calibration ensures measurements are accurate, reducing errors in the map.

- Data integration combines inputs from multiple sensors, creating a comprehensive view.

- The type of technology affects resolution: lidar offers high detail, cameras depend on image clarity, and random methods build little or no map at all.

- Accuracy has to be maintained over time, not just set once, so calibration and data integration need periodic attention.

Lidar’s precise distance measurements produce detailed, reliable maps. Cameras, while cheaper, may struggle in poor lighting, which reduces accuracy. Random navigation gives up mapping detail in exchange for simplicity, so the robot has little record of where it has already been. Your choice hinges on balancing accuracy against cost and efficiency.

Obstacle Detection Speed

Obstacle detection speed determines how quickly a navigation system can recognize and respond to hazards in its environment. Fast detection relies on well-calibrated sensors that interpret the surroundings without delay. Lidar systems often do well here because they process range data quickly, but they need tight hardware integration to get the most out of that speed. Cameras are versatile, but they depend on image processing algorithms that can add latency and slow response times. Random methods are the least consistent, because they typically detect an obstacle only once the robot is at it, not ahead of time. To optimize obstacle detection speed, you need well-calibrated sensors and smooth hardware integration, which reduce delays and improve reaction times. This directly influences how efficiently your robot vacuum navigates complex environments and how quickly and safely it avoids hazards.

Power Consumption Variability

Power consumption variability markedly influences the efficiency and practicality of navigation technologies. When choosing between lidar, camera, or random methods, you’ll notice differing battery drain levels that impact your device’s energy efficiency. For example:

- Lidar systems often consume more power because they drive a laser emitter, in many designs on a rotating turret, and process the returns, which increases battery drain.

- Cameras typically use less energy but may require more processing time, affecting efficiency.

- Random navigation algorithms generally consume the least power but sacrifice accuracy.

- Energy efficiency plays a crucial role in determining how long your device can operate before needing a recharge.

Understanding these differences helps you balance navigation precision against runtime. Higher power consumption may lead to quicker battery depletion, making your device less practical for extended use. Ultimately, selecting a technology with ideal energy efficiency ensures your robot vacuum performs effectively without frequent recharging, especially in environments demanding longer operational periods.
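
The runtime trade-off comes down to simple arithmetic: battery energy divided by total power draw. The sketch below is a rough estimate under stated assumptions. It splits the draw into a base load (motors, suction) plus a navigation load, and every number in the example is a placeholder rather than a measured figure for any product.

```python
def runtime_minutes(battery_wh: float, base_draw_w: float, nav_draw_w: float) -> float:
    """Estimated runtime from battery capacity and average power draw.

    battery_wh:  usable battery energy in watt-hours
    base_draw_w: average draw of motors and suction, in watts
    nav_draw_w:  average extra draw of the navigation sensors and processing
    """
    total_w = base_draw_w + nav_draw_w
    if total_w <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total_w * 60.0


if __name__ == "__main__":
    # Placeholder values: the same battery and base load with two
    # hypothetical navigation loads, to show how the estimate shifts.
    for nav_w in (2.0, 7.0):
        print(nav_w, round(runtime_minutes(60.0, 28.0, nav_w), 1))
```

The estimate ignores how efficiently the robot covers the floor. A system that draws more power but finishes the room in fewer passes can still use less energy per clean than a low-power system that wanders.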

Advantages and Limitations of Each Method

Each method (lidar, camera, and random navigation) offers distinct advantages and faces specific limitations that affect how well it works in different scenarios. Lidar provides high mapping accuracy and works well in low light, but it can be energy-intensive and costly. Cameras deliver detailed visual data that improves obstacle recognition, but they struggle in poor lighting and on visually uniform surfaces that offer few features to track. Random navigation is simple and energy-efficient but has no precise mapping capability, which often makes it less reliable. Your choice depends on your priorities: if precise mapping is essential, lidar excels; for cost and energy savings, random navigation may suffice; and for visual context, cameras are beneficial. Weigh these strengths and limitations to get the best performance from your robot. In addition, sensor fusion techniques can combine multiple methods to offset individual shortcomings and make navigation more reliable.
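
One common way to combine two estimates of the same quantity is inverse-variance weighting, in which the less noisy sensor gets more say. The sketch below illustrates that general technique and is not a description of how any specific vacuum fuses its sensors. The readings and variances are made-up values.

```python
def fuse_estimates(readings):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns (fused value, fused variance). A smaller variance means a more
    trusted reading, so it receives a larger weight.
    """
    readings = list(readings)
    if not readings:
        raise ValueError("need at least one reading")
    if any(variance <= 0 for _, variance in readings):
        raise ValueError("variances must be positive")

    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


if __name__ == "__main__":
    # Hypothetical distance to a wall, in metres: a precise lidar-style
    # reading and a noisier camera-style reading.
    lidar_like = (2.00, 0.01)
    camera_like = (2.20, 0.04)
    print(fuse_estimates([lidar_like, camera_like]))
```

The fused variance is always smaller than the smallest input variance, which is the formal version of the claim that combining sensors can be more reliable than using either one alone. This holds only when the error estimates themselves are reasonable.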

Choosing the Right Navigation System for Your Home

When selecting a navigation system for your home, consider how each technology performs in the kind of space you actually have. Your choice depends on factors like sensor calibration, which underpins accuracy, and on your own preferences, such as how thorough you need the cleaning to be. Consider these points:

- Lidar systems excel at precise mapping but may need periodic calibration and maintenance to stay at peak performance. Environmental factors can affect their reliability, so proper setup matters. Advances in sensor calibration techniques are improving consistency and reducing the need for manual adjustments.

- Camera-based navigation offers rich visual detail and suits well-lit homes, but its performance drops in dim or dark rooms.

- Random or basic systems are simple but less reliable; they suit small or uncluttered spaces where precise mapping and calibration aren’t priorities.

Ultimately, think about your home’s layout and your specific needs to choose a system that offers reliable navigation, aligns with your preferences, and minimizes ongoing adjustments.

Frequently Asked Questions

How Do These Navigation Systems Perform in Complex Home Layouts?

Imagine maneuvering a maze of twisting hallways and furniture; your robot vacuum’s sensors become your guiding stars. With high sensor accuracy, Lidar excels, mapping every corner with precision, while cameras offer visual cues to detect obstacles. Random navigation, like wandering with a blindfold, struggles in complex layouts. Overall, advanced systems handle obstacles better, ensuring thorough cleaning without missing spots or bumping into furniture.

Can a Robot Vacuum Switch Between Navigation Modes Automatically?

It depends on the hardware. A vacuum can only use the sensors it was built with, so a model without lidar or a camera cannot switch to one. Models that combine several sensors can adjust automatically how much they rely on each as conditions change, for example when lighting or layout changes. Software updates and well-calibrated sensors help keep these transitions smooth and cleaning efficient, especially in complex home layouts.

What Maintenance Is Required for Lidar and Camera-Based Systems?

You’ll need to keep your lidar and camera sensors happy with regular calibration and software updates. Think of it as giving your robot’s eyes a spa day: calibration keeps readings accurate, while updates fix bugs and improve performance. If you neglect this, your vacuum might mistake a sock for a wall or get lost in the closet. So check those sensors periodically to keep your cleaning buddy on point and out of trouble.

How Do Environmental Factors Affect Each Navigation Technology’s Performance?

Environmental factors affect each navigation technology differently. Dust on the lens, glare, or bright sunlight can interfere with camera sensors, so keep them clean and the firmware up to date. Lidar performs better in low light but can be thrown off by reflective or transparent surfaces such as mirrors and glass, which may return misleading readings or none at all. Random navigation is less sensitive to these conditions but still benefits from firmware updates that tune its behavior.

Are There Privacy Concerns With Camera-Based Vacuum Navigation?

Yes, there are privacy concerns with camera-based vacuum navigation. You might worry about your personal data and images being stored or accessed without your permission. To safeguard your privacy, make sure your vacuum has strong data security measures, like encryption and limited data sharing. Regularly update the device’s software and review privacy settings, so you stay in control of what information is collected and how it’s used.

Conclusion

When choosing your robot vacuum’s navigation, remember that lidar offers the most accurate mapping and obstacle detection, which makes it well suited to complex homes. Cameras excel at recognizing specific objects but struggle in low light. Random algorithms are simple but less efficient. Understanding these trade-offs helps you pick the right system for your needs and get a smarter, more reliable clean. After all, a well-chosen navigation method can save you time and frustration in the long run.